Where are the crawled URLs in Webmaster Tools coming from?

-

When looking at the crawl errors in Webmaster Tools/Search Console, where is Google pulling these URLs from? Sitemap?

-

Just to be complete: Google Search Console will list errors for pages with links coming from a few general locations:

1. Crawling links on your website. Google starts somewhere on your site and goes from link to link.

2. Crawling links in your sitemap.

3. Crawling URLs that used to exist but are no longer on your site or in your sitemap. I have seen Google keep URLs in memory and come back to hit pages that are no longer reachable through option 1 or option 2. If you used to have a bunch of 301 redirects in place for an old version of your website, and your developer then changes something that deletes all those 301s so they become 404s, you will find those pages showing up as errors again. This is really useful, as it helps you diagnose the issue and fix it.

4. Crawling links from other sites. Sometimes this is how URLs get crawled for #3.
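To make the redirect scenario from the third point concrete, here is a minimal sketch that compares two snapshots of status codes and lists the URLs that went from 301 to 404. The function name and the two dictionaries are hypothetical; the status codes would have to come from your own checks of the old URLs.

```python
def redirects_turned_404(before, after):
    """Return URLs that answered 301 in `before` and 404 in `after`.

    Both arguments are dicts of URL -> HTTP status code (hypothetical
    snapshots, e.g. taken before and after a site change).
    """
    return sorted(
        url
        for url, status in before.items()
        if status == 301 and after.get(url) == 404
    )


# Made-up example data for illustration only.
before = {
    "https://example.com/old-a": 301,
    "https://example.com/old-b": 301,
    "https://example.com/page": 200,
}
after = {
    "https://example.com/old-a": 404,
    "https://example.com/old-b": 301,
    "https://example.com/page": 200,
}
lost_redirects = redirects_turned_404(before, after)
```

Any URL in `lost_redirects` is a candidate for restoring the redirect.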

Here is what really sucks about Search Console, and I mean sucks big bananas, if you are trying to diagnose an issue. On the Search Console error page, you can click a URL in the report, a box pops up, and you can then click the "Linked From" tab to see which pages link to the URL in question. That is good! But if you download the CSV, all of that info is lost. If you have more than 20 errors to deal with, you have no practical way to manage things and see whether there is a trend. You are left clicking a lot of links in the report, taking lots of notes, and going a little insane.
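One possible workaround, sketched under assumptions: if you have the HTML of your own pages (from your own crawl, say), you can rebuild a rough "linked from" map for the error URLs yourself. This is not anything Search Console provides; `linked_from`, `LinkCollector`, and the example.com data are hypothetical names and inputs for illustration.

```python
from collections import defaultdict
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag in one HTML document."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def linked_from(pages, error_urls):
    """Map each error URL to the sorted list of pages that link to it.

    pages: dict of page URL -> HTML source (assumed to come from your own crawl)
    error_urls: URLs taken from the crawl error CSV
    """
    wanted = set(error_urls)
    result = defaultdict(set)
    for page_url, html in pages.items():
        collector = LinkCollector()
        collector.feed(html)
        for href in collector.hrefs:
            absolute = urljoin(page_url, href)  # resolve relative links
            if absolute in wanted:
                result[absolute].add(page_url)
    return {url: sorted(sources) for url, sources in result.items()}


# Made-up example data for illustration only.
pages = {
    "https://example.com/": '<a href="/old-page">Old</a> <a href="/about">About</a>',
    "https://example.com/blog": '<a href="https://example.com/old-page">Old</a>',
}
errors = ["https://example.com/old-page", "https://example.com/gone"]
report = linked_from(pages, errors)
```

Error URLs that do not show up in `report` are not linked from the pages you supplied, which hints that they come from old URLs Google remembers or from links on other sites.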

Good luck!

-

I agree 100% with Dirk!

Google is going to crawl your site as long as your domain's robots.txt file and meta robots tag allow the bot to crawl it. By not telling Google anything, you are saying "welcome to my site, please index."

Google Webmaster Tools is doing you a favor by saying: look, this is a problem the bot encountered while indexing your site, so look into it.

Submitting an XML sitemap to Google will definitely help show them where to look, and you can request that they index a page using Fetch as Google.
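As a rough way to see what a robots.txt file allows, here is a small sketch using Python's standard `urllib.robotparser`. The robots.txt content and the example.com URLs are made up, and Python's parser does not necessarily match Googlebot's rule handling in every detail, so treat the result as an approximation.

```python
from urllib.robotparser import RobotFileParser

# Made-up robots.txt content for illustration only.
robots_txt = """\
User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())


def googlebot_allowed(url):
    """Return True if these rules let the Googlebot user agent fetch the URL."""
    return parser.can_fetch("Googlebot", url)


blog_ok = googlebot_allowed("https://example.com/blog")
private_ok = googlebot_allowed("https://example.com/private/page")
```

With these rules `/blog` is allowed and `/private/page` is blocked. A site with no rules at all blocks nothing, which is the "welcome to my site" case.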
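For reference, a minimal sketch of generating a sitemap file with Python's standard library. The namespace is the one defined by the sitemaps.org protocol; `build_sitemap` and the example.com URLs are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a sitemap XML string with one <url><loc> entry per URL."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


# Made-up URLs for illustration only.
sitemap_xml = build_sitemap([
    "https://example.com/",
    "https://example.com/about",
])
```

The resulting string can be saved as `sitemap.xml` and submitted in Search Console.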

Some good advice on how to fix the issues found:

https://www.distilled.net/blog/seo/indexation-problems-diagnosis-using-google-webmaster-tools/

A great resource on indexing and robots.txt:

https://www.distilled.net/blog/seo/advanced-seo-troubleshooting-why-isnt-this-page-indexed/

https://varvy.com/robottxt.html

I hope this helps,

Tom

-

These errors are the problems Googlebot encounters while crawling your site. A sitemap can help Googlebot crawl your site better, but it isn't strictly necessary.

rgds

Dirk

Related Questions

-

Can you help by advising how to stop a URL from referring to another URL on my website with a 404 errorplease?

How to stop a URL from referring to another URL on my site. I'm getting a 404 error on a referred URL which is (https://webwritinglab.com/know-exactly-what-your-ideal-clients-want-in-8-easy-steps/[null id=43484])referred from URL (https://webwritinglab.com/know-exactly-what-your-ideal-clients-want-in-8-easy-steps/) The referred URL is the URL page that I want and I do not need it redirecting to the other URL as that's presenting a 404 error. I have tried saving the permalink in WordPress and recreated the .htaccess file and the problem is still there. Can you advise how to fix this please? Is it a case of removing the redirect? Is this advisable and how do I do that please? Thanks

Technical SEO | | Nichole.wynter20200 -

URL Inspector, Rich Results Tool, GSC unable to detect Logo inside Embedded schema

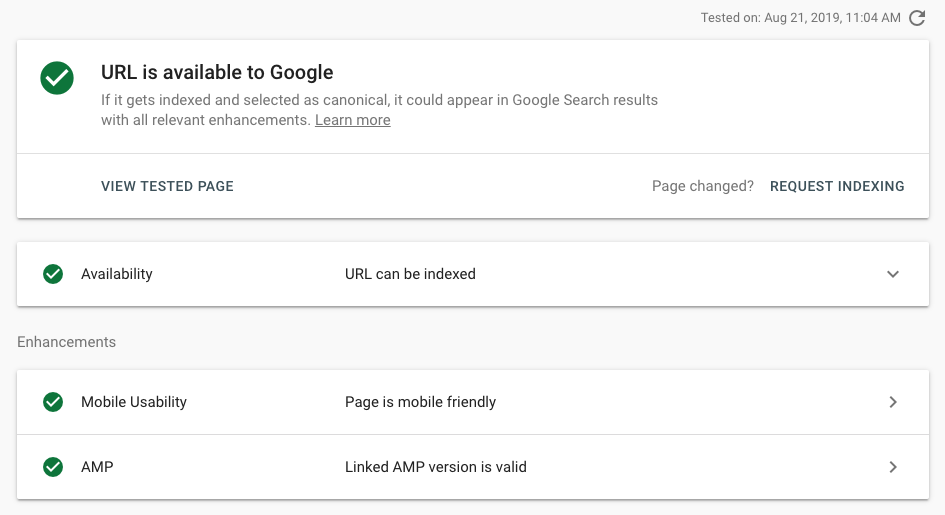

I work on a news site and we updated our Schema set up last week. Since then, valid Logo items are dropping like flies in Search Console. Both URL inspector & Rich Results test cannot seem to be able to detect Logo on articles. Is this a bug or can Googlebot really not see schema nested within other schema?Previously, we had both Organization and Article schema, separately, on all article pages (with Organization repeated inside publisher attribute). We removed the separate Organization, and now just have Article with Organization inside the publisher attribute. Code is valid in Structured Data testing tool but URL inspection etc. cannot detect it. Example: https://bit.ly/2TY9Bct Here is this page in URL inspector:

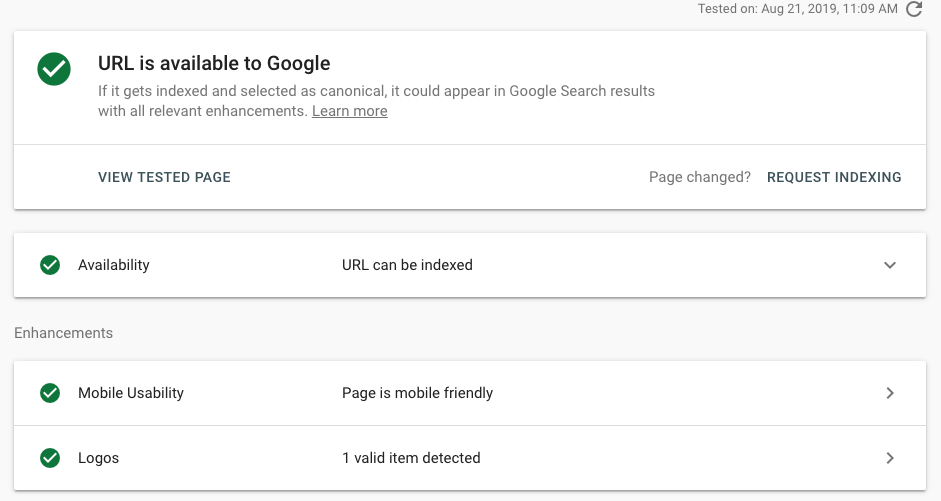

Technical SEO | | ValnetInc By comparison, we also have Organization schema (un-nested) on our homepage. Interestingly enough, the tools can detect that no problem. That's leading me to believe that either nested schema is unreadable by Googlebot OR that this is not an accurate representation of Googlebot and it's only unreadable by the testing tools. Here is the homepage in URL inspector:

By comparison, we also have Organization schema (un-nested) on our homepage. Interestingly enough, the tools can detect that no problem. That's leading me to believe that either nested schema is unreadable by Googlebot OR that this is not an accurate representation of Googlebot and it's only unreadable by the testing tools. Here is the homepage in URL inspector:  In pseudo-code, our OLD schema looked like this: The NEW schema set up has the same Article schema set up, but the separate script for Organization has been removed. We made the change to embed our schema for a couple reasons: first, because Google's best practices say that if multiple schemas are used, Google will choose the best one so it's better to just have one script; second, Google's codelabs tutorial for schema uses a nested structure to indicate hierarchy of relevancy to the page. My question is, does nesting schemas like this make it impossible for Googlebot to detect a schema type that's 2 or more levels deep? Or is this just a bug with the testing tools?

0

In pseudo-code, our OLD schema looked like this: The NEW schema set up has the same Article schema set up, but the separate script for Organization has been removed. We made the change to embed our schema for a couple reasons: first, because Google's best practices say that if multiple schemas are used, Google will choose the best one so it's better to just have one script; second, Google's codelabs tutorial for schema uses a nested structure to indicate hierarchy of relevancy to the page. My question is, does nesting schemas like this make it impossible for Googlebot to detect a schema type that's 2 or more levels deep? Or is this just a bug with the testing tools?

0 -

How Shold I Structure URLs for a Portfolio?

Hi Moz Community, My web design agency has a lot of different projects we showcase in the portfolio of our site, but I'm having trouble finding information on the best practices for how to structure the URLs for all of those portfolio pages. We have tons of projects that we've done in the same service category and even multiple projects we've done for the same company within that category. For example, right now things look like: www.rootdomain.com/portfolio/web-design/clientname which tends to get long, bulky and awkward, considering we do lots of projects in the web design category and might do a second project for the same company. How should we differentiate the projects from a URL standpoint to avoid having all of the pages compete for the same keyword? Does it even matter, given that these portfolio showcases are primarily image-based anyways?

Technical SEO | | formandfunctionagency0 -

Clarification on indexation of XML sitemaps within Webmaster Tools

Hi Mozzers, I have a large service based website, which seems to be losing pages within Google's index. Whilst working on the site, I noticed that there are a number of xml sitemaps for each of the services. So I submitted them to webmaster tools last Friday (14th) and when I left they were "pending". On returning to the office today, they all appear to have been successfully processed on either the 15th or 17th and I can see the following data: 13/08 - Submitted=0 Indexed=0

Technical SEO | | Silkstream

14/08 - Submitted=606,733 Indexed=122,243

15/08 - Submitted=606,733 Indexed=494,651

16/08 - Submitted=606,733 Indexed=517,527

17/08 - Submitted=606,733 Indexed=517,498 Question 1: The indexed pages on 14th of 122,243 - Is this how many pages were previously indexed? Before Google processed the sitemaps? As they were not marked processed until 15th and 17th? Question 2: The indexed pages are already slipping, I'm working on fixing the site by reducing pages and improving internal structure and content, which I'm hoping will fix the crawling issue. But how often will Google crawl these XML sitemaps? Thanks in advance for any help.0 -

Duplicate Meta Titles and Descriptions Issue in Google Webmaster Tool

Hello All, We have one site named http://www.bargains-online.com.au/ & have some categories along with filter option on left side like filter by price & by brand, ect. We have already set rel canonical tags on all filtered pages, but still those all pages showing duplicate page titles and description warning in HTML Improvements section in Google Webmaster Tool. For Example: http://www.bargains-online.com.au/pressure-cleaners.html We've set rel canonical tag on below pages. http://www.bargains-online.com.au/pressure-cleaners/l/manufacturer:black-eagle.html http://www.bargains-online.com.au/pressure-cleaners/l/price:2,100.html http://www.bargains-online.com.au/pressure-cleaners/l/price:3,100.html Kindly request if anybody has any solutions for the same, please share with us. Thanks, Akshay

Technical SEO | | akshaydesai0 -

Sitelink demotion not working after submitting in Google webmaster tool

Hello Friends, I have a question regarding demotion of sitelinks in Google webmaster tool. Scenario: I have demoted one of the sitelink for my website two months back; still the demoted sitelink has not been removed from the Google search results.May I know any reason, why this page is not getting removed even after demoting from GWT? If we resubmit the same link in demotion tool one more time, will it work? Can anybody help me out with this? Note: Since the validly of demotion exists only for 3 months (90 days), I am concerned about the same.

Technical SEO | | zco_seo0 -

301 an old URL with a ? in the URL?

I am redoing a site and the URL's are changing structure. The client's site was in magento and in the store they would get two URLs, for example: /store/categoryname/productname and /store/categoryname/productname?SID=dslkajsfdoiu947598whouieht983hg98 Do I have to 301 redirect both of these URL's to their new counterpart? Both go to the same content but magento seemed to add these SIDs into the navigation and Google has both versions in the index.

Technical SEO | | DanDeceuster0 -

Keyword Difficulty Tool

Hi Mozzers! Randfishkin just posted yesterday a very nice important and helpfull post, about keyword difficulty. I will be happy, if you can write here the metrics from reports of keyword difficulty, to know more about position of our website on SERP, and to know more what to engage if someone is ranking higher than me, with same metrics of the report of keyword difficulty. It would be very nice, if we talk on this topic here about keyword difficulty how to's. Thanks

Technical SEO | | leadsprofi0